In our previous article, Achieving DevSecOps using Open-Source Tools, we explored what “DevSecOps” really means and how it can be achieved using simple open-source tools integrated into an existing DevOps pipeline orchestrated with Jenkins and deployed on Docker in an ad hoc on-premises architecture.

In this article, Rohit Salecha and Anand Tiwari explain how DevSecOps can be achieved in an environment that runs entirely on AWS and its native offerings.

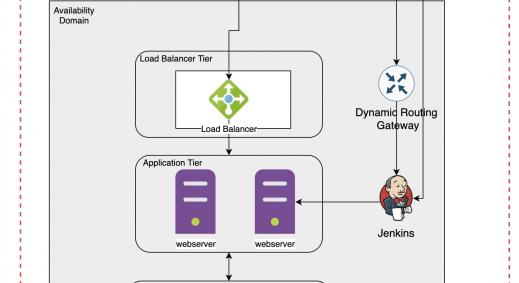

Before jumping into DevSecOps, let’s lay out an all-native DevOps environment in AWS.

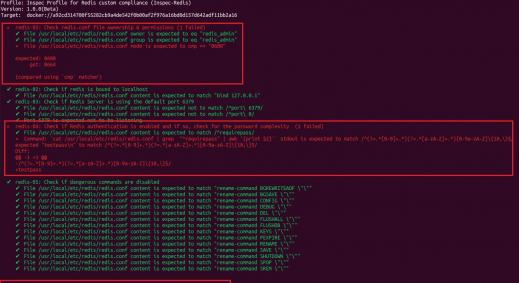

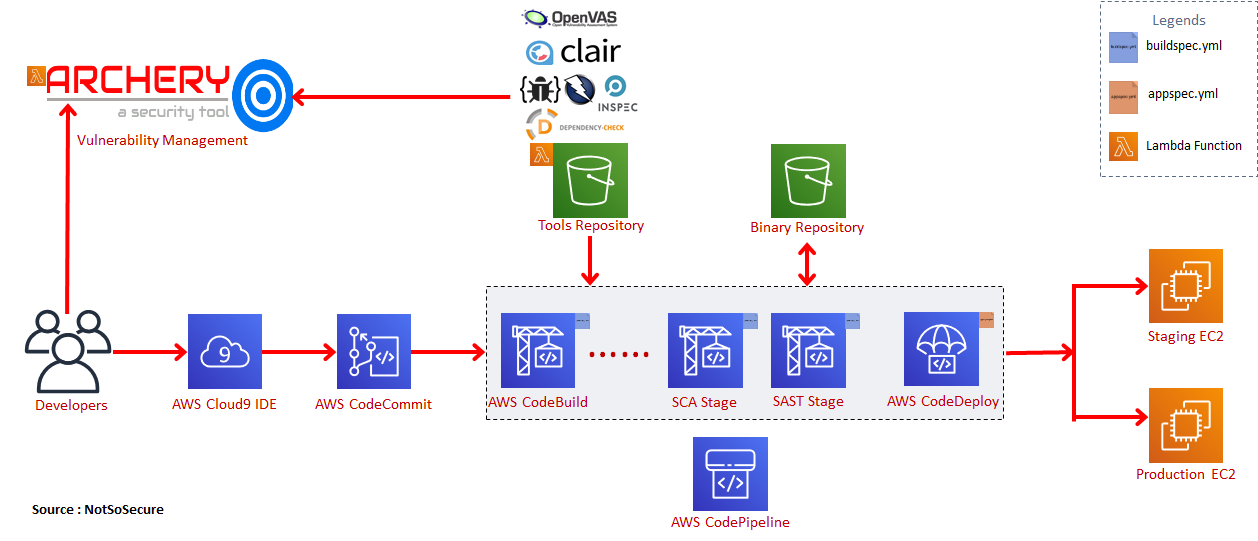

Illustrative DevOps on AWS

The diagram above illustrates the native AWS services that can be used for CI/CD automation on AWS.

AWS Services Detailed:

- Developers use AWS Cloud9 to write code. AWS Cloud9 is a cloud-based integrated development environment (IDE) that lets you write, run, and debug your code with just a browser. It includes a code editor, debugger and terminal with customizable plugins.

- Developers commit their code to AWS CodeCommit. AWS CodeCommit is a fully-managed source control service that hosts secure Git-based repositories. It makes it easy for teams to collaborate on code in a secure and highly scalable ecosystem.

- AWS CodeBuild is a service where developers can compile their source code, perform integration from multiple SCMs and create build artifacts. It is configured using a “buildspec.yml” file in which all instructions related to integration, compilation and build are declared. It provides pre-packaged build environments for popular programming languages and build tools such as Apache Maven and Gradle, and also lets you create a customized build environment.

- AWS S3 buckets are used for storing the final build artifacts/binaries.

- AWS CodeDeploy is a service that pulls the binary artifacts from S3 buckets and deploys them to pre-provisioned AWS environments such as EC2, Elastic Beanstalk and ECS. CodeDeploy is fully configurable using the “appspec.yml” file.

- Finally, we have AWS CodePipeline, which orchestrates the build and deploy stages defined in CodeBuild and CodeDeploy, giving us a fully managed continuous delivery service.

Integrating Security in AWS

AWS provides many cloud-native security tools. However, those tools generally focus on monitoring and reporting, so we still need to deploy and use our own tools to address the other areas.

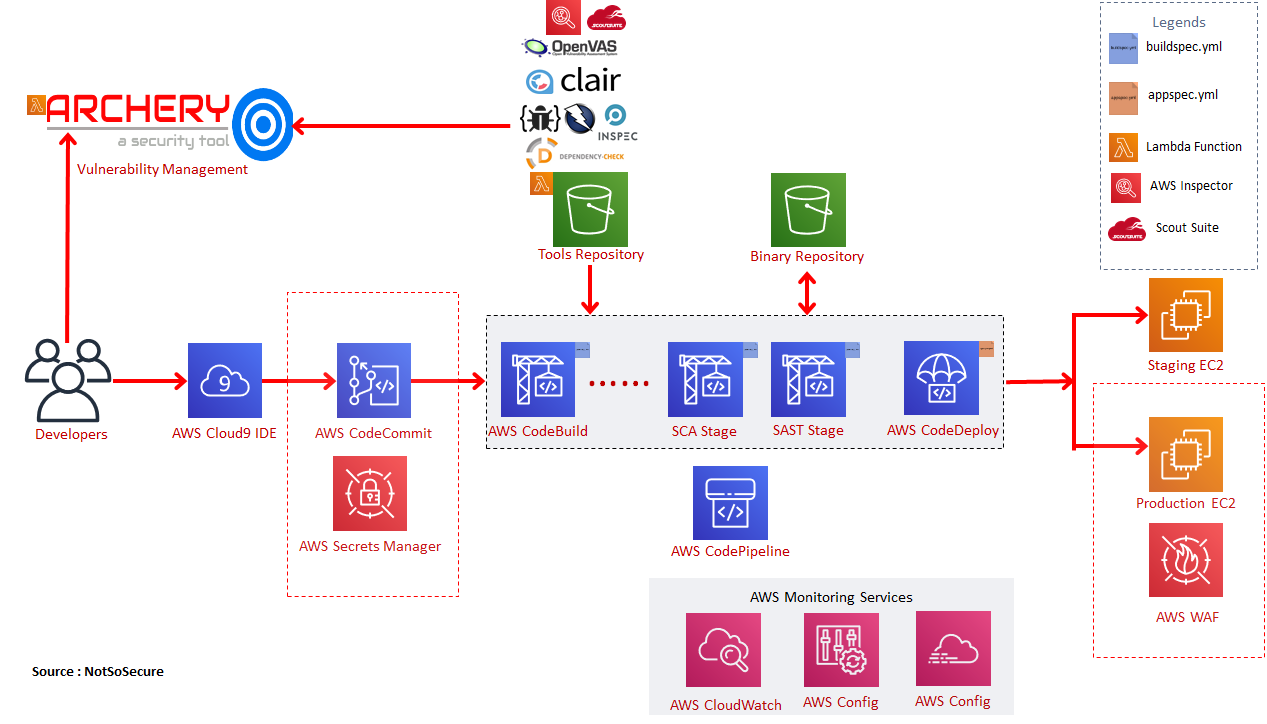

Integrating security in an AWS environment takes steps similar to those required in an on-prem environment, such as integrating a code analyser for SAST (static application security testing) or a dependency checker for SCA (software composition analysis), as seen in the previous post.

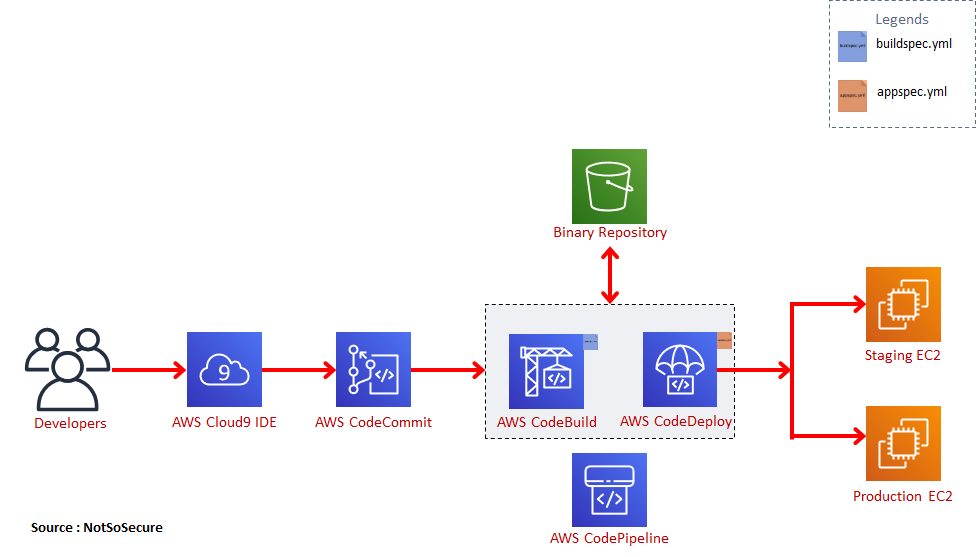

What differs is how the various tools are configured for execution in an AWS environment.

In an on-prem model, tools can be downloaded at will and executed in a script or a Docker container. In the cloud, however, billing is generally usage-based and every download incurs extra charges. The strategy for using these tools in AWS is therefore to create a tools repository in an S3 bucket and reference that bucket from each “buildspec.yml” file. Each stage has its own “buildspec-<stage>.yml”, in which the tools are fetched from the internal S3 bucket and executed. Below is a sample “buildspec-sca.yml” file for the SCA stage, which performs dependency checks.
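As a rough illustration of the pattern described above (this is a sketch, not the authors' actual file), the following Python snippet assembles the structure of a per-stage buildspec that first pulls a tool from an internal S3 bucket and then runs it. The bucket name, archive name, project name and report path are all hypothetical, and the resulting dictionary would still need to be written out as YAML before CodeBuild could use it.

```python
import json


def make_stage_buildspec(tools_bucket, tool_archive, run_commands, report_files):
    """Return the layout of a CodeBuild buildspec for one security stage."""
    return {
        "version": 0.2,
        "phases": {
            "install": {
                "commands": [
                    # Fetch the tool from the internal S3 tools repository
                    # instead of downloading it from the internet.
                    f"aws s3 cp s3://{tools_bucket}/{tool_archive} .",
                    f"unzip -q {tool_archive}",
                ]
            },
            "build": {"commands": list(run_commands)},
        },
        # Keep the scan report as a build artifact.
        "artifacts": {"files": list(report_files)},
    }


# Hypothetical values for an SCA stage that runs OWASP Dependency-Check.
sca_buildspec = make_stage_buildspec(
    tools_bucket="example-devsecops-tools",
    tool_archive="dependency-check.zip",
    run_commands=[
        "./dependency-check/bin/dependency-check.sh --project demo-app "
        "--scan . --format XML --out dependency-check-report.xml"
    ],
    report_files=["dependency-check-report.xml"],
)

print(json.dumps(sca_buildspec, indent=2))
```

Generating the file from one function keeps every “buildspec-<stage>.yml” consistent: only the archive name and the run commands change from stage to stage.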

These tools need to be updated regularly, for which we can employ a Lambda function that periodically checks for new versions.
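The core of such an update check is a version comparison. The sketch below assumes a hypothetical event shape in which the scheduled Lambda receives the versions currently stored in the tools bucket alongside the latest released versions; how those two lists are obtained is left out, and the tool names are placeholders.

```python
def parse_version(text):
    """Turn '5.2.4' or 'v5.2.4' into a comparable tuple such as (5, 2, 4)."""
    return tuple(int(part) for part in text.lstrip("v").split("."))


def tools_needing_update(stored, latest):
    """Return the names of tools whose latest release is newer than the stored copy."""
    outdated = []
    for name, current in stored.items():
        newest = latest.get(name)
        if newest is not None and parse_version(newest) > parse_version(current):
            outdated.append(name)
    return sorted(outdated)


def handler(event, context):
    """Lambda-style entry point; the 'stored' and 'latest' keys are assumptions."""
    return {"outdated": tools_needing_update(event["stored"], event["latest"])}


# Local usage example with made-up tool names and versions.
example_event = {
    "stored": {"dependency-check": "5.9.1", "example-scanner": "1.0.0"},
    "latest": {"dependency-check": "v5.10.0", "example-scanner": "1.0.0"},
}
print(handler(example_event, None))
```

Comparing tuples of integers rather than raw strings matters here: as strings, "5.9.1" would sort after "5.10.0".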

If a tool is compatible with the AWS Lambda specifications, converting it into a Lambda service is an excellent option, and for vulnerability management that is exactly what we have done. We have deployed Archery as a Lambda service that is activated only when a tool needs to push vulnerability data into it.

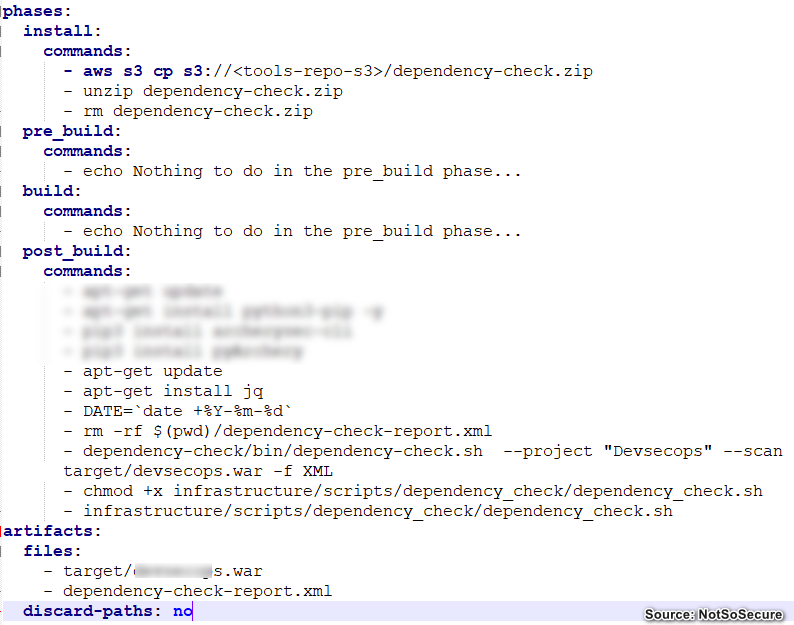

The diagram below is an illustrative example of an AWS DevSecOps pipeline.

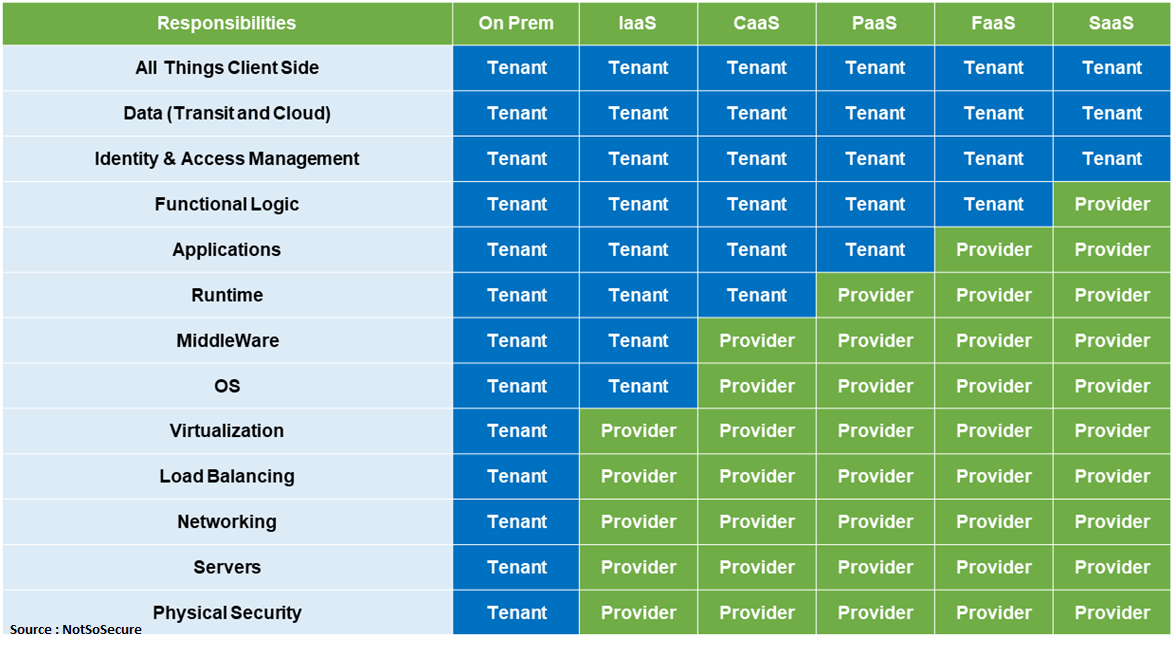

Under the shared responsibility model (shown below), a large share of the responsibility lies with the service provider, but a few areas remain with the tenant. In essence, most of the vulnerabilities associated with the environment tend to originate from misconfigurations.

In addition to these security misconfigurations, there are other issues associated with AWS services, such as AWS access key compromise and the exploitation of billing caps. Let us look at the complete AWS threat landscape in more detail.

AWS Threat Landscape

The issues below make up the AWS threat landscape.

Access-Control Security Misconfiguration

In AWS, access control is managed through IAM policies. A policy is an object in AWS that, when associated with an identity or resource, defines its permissions. If an EC2 instance needs to access an S3 bucket, specific permissions are needed. An oversight or a grant of excessive privileges can leave S3 buckets publicly accessible, leading to a possible leak of sensitive information. A curated list of S3 bucket leaks can be found at https://github.com/nagwww/s3-leaks

In another incident, a single administrator user was configured with access to all AWS resources; when those credentials fell into the wrong hands, the result was a complete wipe-out of that company.

These issues arise from lapses in access control policies and permissions. It is essential to regularly review the permissions assigned to each resource.
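One simple review that can be automated is flagging policies that allow every action on every resource, the kind of grant behind the administrator scenario above. The sketch below works on an IAM policy document already loaded as a Python dictionary; the two sample policies and the bucket name are made up for illustration, and a real review would need to look at far more than full wildcards.

```python
def as_list(value):
    """IAM accepts either a single value or a list; normalise to a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def find_overly_broad_statements(policy):
    """Return the Allow statements that grant all actions on all resources."""
    findings = []
    for statement in as_list(policy.get("Statement")):
        if statement.get("Effect") != "Allow":
            continue
        actions = as_list(statement.get("Action"))
        resources = as_list(statement.get("Resource"))
        if "*" in actions and "*" in resources:
            findings.append(statement)
    return findings


# Hypothetical policies: one administrator-style grant, one scoped grant.
admin_policy = {
    "Version": "2012-10-17",
    "Statement": [{"Effect": "Allow", "Action": "*", "Resource": "*"}],
}
scoped_policy = {
    "Version": "2012-10-17",
    "Statement": {
        "Effect": "Allow",
        "Action": ["s3:GetObject"],
        "Resource": "arn:aws:s3:::example-artifacts/*",
    },
}

print(len(find_overly_broad_statements(admin_policy)))   # number of risky statements
print(len(find_overly_broad_statements(scoped_policy)))
```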

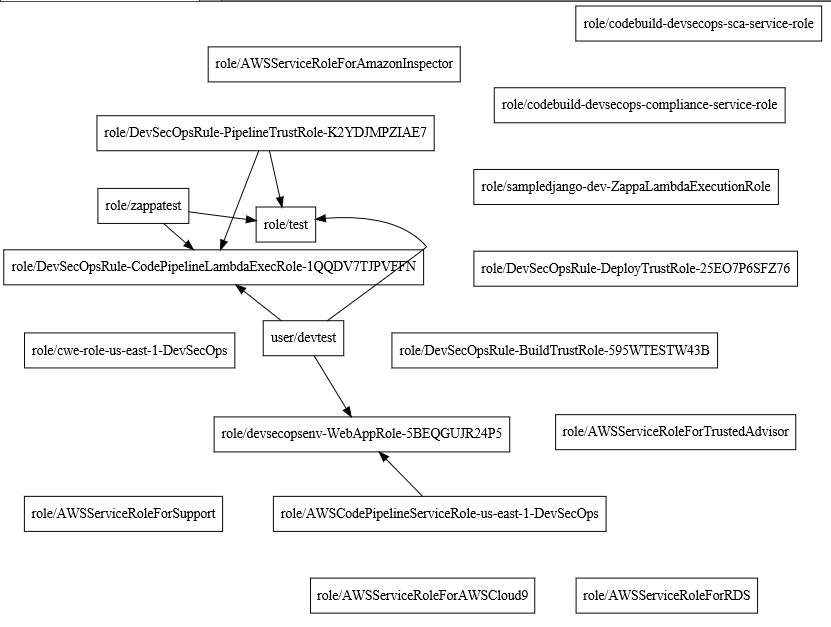

PMapper, a utility that lets users view IAM permissions in AWS, can be used to produce a graphical representation of all the permissions assigned to different resources and users. We ran this tool on our AWS DevSecOps lab and the graphical result is shown below.

Beyond that, AWS S3 Inspector is a tool that can be easily integrated into your DevSecOps process to check and alert if any S3 bucket in your account has public read/write access.
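To make the idea of a public read/write check concrete, here is a minimal sketch (not how S3 Inspector itself is implemented) that inspects a bucket ACL's grants list, in the shape returned by the S3 GetBucketAcl API, for grants to the global "AllUsers" or "AuthenticatedUsers" groups. The sample grants are invented, and the check covers ACLs only; bucket policies can also make a bucket public and are not examined here.

```python
# Group URIs that S3 uses for "everyone" and "any authenticated AWS user".
PUBLIC_GROUP_URIS = {
    "http://acs.amazonaws.com/groups/global/AllUsers",
    "http://acs.amazonaws.com/groups/global/AuthenticatedUsers",
}


def public_permissions(grants):
    """Return the sorted permissions that an ACL gives to public groups."""
    found = set()
    for grant in grants:
        grantee = grant.get("Grantee", {})
        if grantee.get("Type") == "Group" and grantee.get("URI") in PUBLIC_GROUP_URIS:
            found.add(grant.get("Permission"))
    return sorted(found)


# Hypothetical ACL: the owner has full control and everyone can read.
sample_grants = [
    {"Grantee": {"Type": "CanonicalUser", "ID": "example-owner-id"},
     "Permission": "FULL_CONTROL"},
    {"Grantee": {"Type": "Group",
                 "URI": "http://acs.amazonaws.com/groups/global/AllUsers"},
     "Permission": "READ"},
]

exposed = public_permissions(sample_grants)
if exposed:
    print("ALERT: bucket ACL grants public access:", exposed)
```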

The user guide below lists the best practices recommended by AWS; they are worth following.

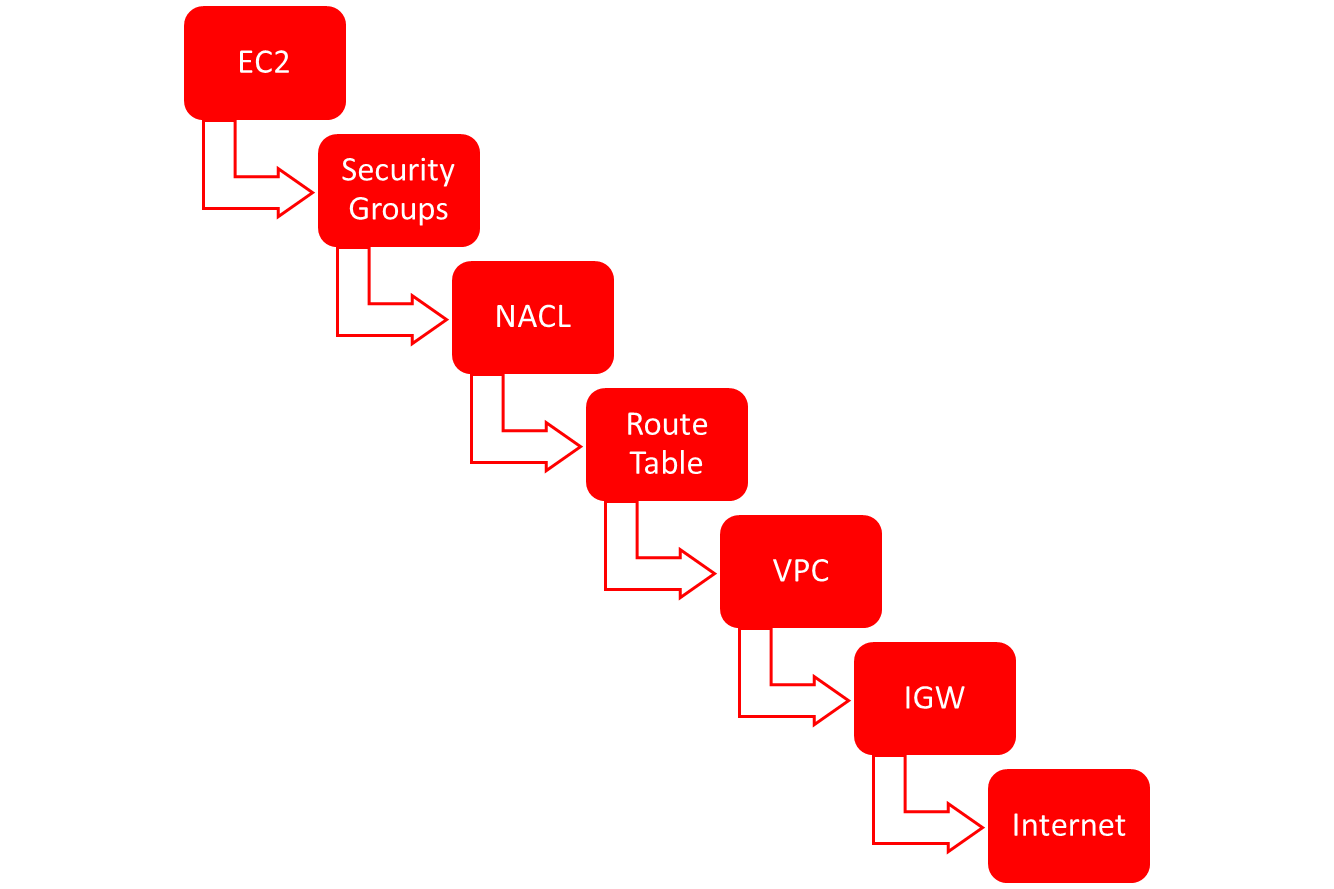

Network Security Misconfiguration

The diagram below shows a typical flow of network traffic and the different places where it can be tapped. Security Groups are like host-based firewalls for individual EC2 instances. Network Access Control Lists (NACLs) can be configured for a group of EC2 instances. Route Tables route traffic between different networks, and finally there are VPCs, the overarching network segmentation unit connected to an Internet Gateway.

Misconfigurations are very likely in such a complex infrastructure. For example, a ThreatStack report states that 73% of the companies they analysed had an SSH port open to the internet.
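The open-SSH case is straightforward to detect from a security group description. The following sketch assumes the group is provided as a dictionary in the shape returned by the EC2 DescribeSecurityGroups API and reports whether any inbound rule exposes TCP port 22 to the whole internet; both sample groups and their IDs are hypothetical.

```python
def allows_world_ssh(security_group):
    """True if an inbound rule opens TCP port 22 to 0.0.0.0/0 or ::/0."""
    for rule in security_group.get("IpPermissions", []):
        protocol = rule.get("IpProtocol")
        if protocol == "-1":
            # "-1" means all protocols and all ports.
            covers_ssh = True
        elif protocol == "tcp":
            covers_ssh = rule.get("FromPort", 0) <= 22 <= rule.get("ToPort", 65535)
        else:
            covers_ssh = False
        if not covers_ssh:
            continue
        ipv4 = [r.get("CidrIp") for r in rule.get("IpRanges", [])]
        ipv6 = [r.get("CidrIpv6") for r in rule.get("Ipv6Ranges", [])]
        if "0.0.0.0/0" in ipv4 or "::/0" in ipv6:
            return True
    return False


# Hypothetical groups: one exposes SSH to the world, one restricts it.
open_group = {
    "GroupId": "sg-example-open",
    "IpPermissions": [
        {"IpProtocol": "tcp", "FromPort": 22, "ToPort": 22,
         "IpRanges": [{"CidrIp": "0.0.0.0/0"}]},
    ],
}
restricted_group = {
    "GroupId": "sg-example-restricted",
    "IpPermissions": [
        {"IpProtocol": "tcp", "FromPort": 22, "ToPort": 22,
         "IpRanges": [{"CidrIp": "10.0.0.0/16"}]},
        {"IpProtocol": "tcp", "FromPort": 443, "ToPort": 443,
         "IpRanges": [{"CidrIp": "0.0.0.0/0"}]},
    ],
}

for group in (open_group, restricted_group):
    print(group["GroupId"], allows_world_ssh(group))
```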

Securely managing configuration, whether for the network or for permissions, cannot be done at the click of a button. It requires a mix of different tools and dedicated resources. The section below focuses on the tools offered by AWS as well as some open-source tools that can be used.

AWS Security Tools and Services

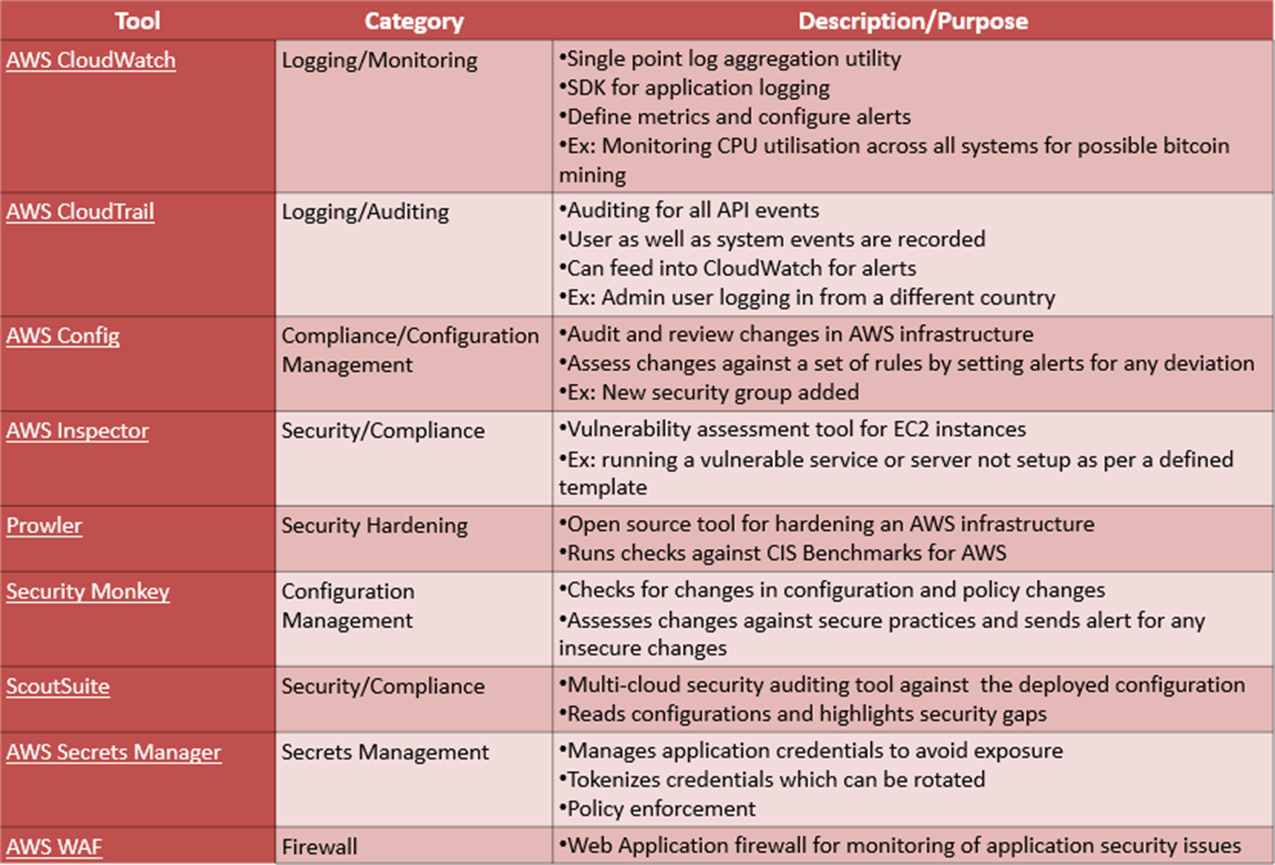

The table below describes the various tools and their purpose.

Updated Illustrative AWS DevSecOps Diagram

AWS IAM Keys Leakage

In AWS, if an EC2 instance needs to access an S3 bucket, the proper IAM roles need to be configured. IAM roles grant permissions between AWS services and are distinct from user permissions. If a service needs programmatic access to AWS resources, it needs a set of AWS access keys, which can be obtained from the instance metadata URL http://169.254.169.254/latest/meta-data/iam/security-credentials

There are two ways in which these keys can be leaked or exposed:

- If an application deployed on an EC2 instance has an RCE or SSRF vulnerability, an attacker can request the metadata URL, obtain the AWS access keys and gain access to every AWS resource those keys allow. We have written an extensive blog explaining this attack vector.

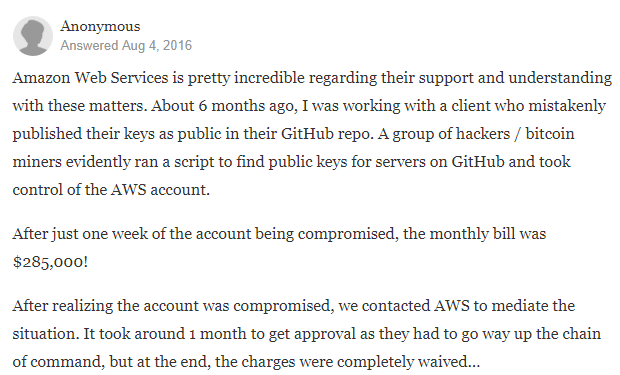

- AWS keys are accidentally committed along with source code, left exposed in publicly accessible folders, and so on.

Leakage of AWS IAM keys can be prevented by employing pre-commit hooks like Talisman, which detect such information before developers can commit changes to a repo.

Alternatively, tools like truffleHog could be employed periodically to check for any exposed credential in a code repository.
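The basic idea behind such scanners can be shown with a single pattern. The sketch below is not how Talisman or truffleHog are implemented, and those tools apply much broader checks; it only searches text for strings shaped like long-term AWS access key IDs ("AKIA" followed by 16 upper-case letters or digits). The sample content uses the example key ID from AWS documentation.

```python
import re

# Long-term IAM user access key IDs start with "AKIA" and are 20 characters long.
ACCESS_KEY_ID = re.compile(r"\b(AKIA[0-9A-Z]{16})\b")


def find_access_key_ids(text):
    """Return (line_number, key_id) pairs for every match in the given text."""
    hits = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for key_id in ACCESS_KEY_ID.findall(line):
            hits.append((line_number, key_id))
    return hits


# Sample file content; the key ID is the documented AWS example value.
sample_config = (
    'aws_access_key_id = "AKIAIOSFODNN7EXAMPLE"\n'
    'region = "eu-west-1"\n'
)

for line_number, key_id in find_access_key_ids(sample_config):
    print(f"possible AWS access key ID on line {line_number}: {key_id}")
```

A check like this could run in a pre-commit hook over staged files, or periodically over a whole repository; it would miss secret access keys and any credential that does not follow this prefix.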

Best practices for protecting AWS access keys should also be followed, such as rotating keys, deleting keys that are no longer in use and limiting the resources each key can access.
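Key rotation in particular lends itself to an automated check. The sketch below assumes access key metadata in the shape returned by the IAM ListAccessKeys API (an "AccessKeyId", a "Status" and a "CreateDate" per key) and lists active keys older than a chosen age. The 90-day limit and the key IDs are assumptions for illustration, not values taken from the article.

```python
from datetime import datetime, timedelta, timezone


def keys_due_for_rotation(key_metadata, now, max_age_days=90):
    """Return the IDs of active keys created more than max_age_days ago."""
    cutoff = now - timedelta(days=max_age_days)
    return [
        key["AccessKeyId"]
        for key in key_metadata
        if key.get("Status") == "Active" and key["CreateDate"] < cutoff
    ]


# Hypothetical key metadata for one user.
sample_keys = [
    {"AccessKeyId": "AKIAOLDEXAMPLE000001", "Status": "Active",
     "CreateDate": datetime(2023, 6, 1, tzinfo=timezone.utc)},
    {"AccessKeyId": "AKIANEWEXAMPLE000002", "Status": "Active",
     "CreateDate": datetime(2023, 12, 1, tzinfo=timezone.utc)},
    {"AccessKeyId": "AKIAOFFEXAMPLE000003", "Status": "Inactive",
     "CreateDate": datetime(2022, 1, 1, tzinfo=timezone.utc)},
]

# A fixed "now" keeps the example reproducible.
reference_time = datetime(2024, 1, 1, tzinfo=timezone.utc)
print(keys_due_for_rotation(sample_keys, reference_time))
```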

Exploiting Billing Caps

If your AWS keys are compromised, the very first thing attackers would do is set up EC2 instances and start mining bitcoin.

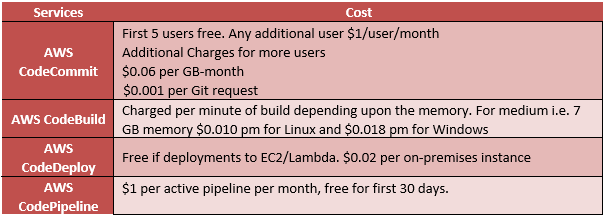

Beyond that, let’s consider the cost of running DevOps on AWS.

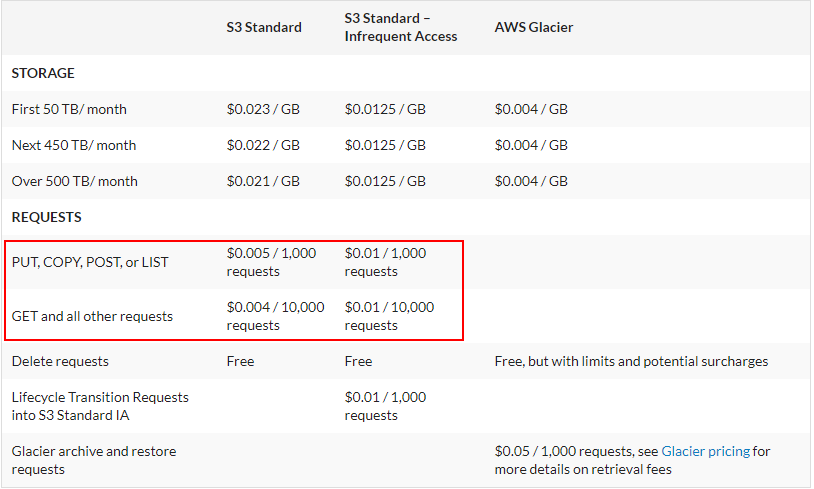

Here is the cost breakdown of using S3 Buckets.

The key point to understand here is that it is not just storage costs, but also “Per Request” costs that need to be considered.

One of the best practices while using AWS is to create billing alerts for your AWS accounts so as to protect yourself from being overcharged.
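The effect of per-request pricing can be seen with some simple arithmetic. In the sketch below, all three prices and the alert threshold are placeholder values chosen for illustration, not actual AWS rates; the point is only that request charges can outweigh the storage charge and that the total should be compared against a budget.

```python
def estimate_s3_monthly_cost(storage_gb, put_requests, get_requests,
                             price_per_gb, price_per_1k_put, price_per_1k_get):
    """Estimate a monthly S3 bill as storage cost plus per-request cost."""
    storage_cost = storage_gb * price_per_gb
    request_cost = (put_requests / 1000) * price_per_1k_put \
        + (get_requests / 1000) * price_per_1k_get
    return round(storage_cost + request_cost, 2)


def exceeds_budget(cost, threshold):
    """True when the estimated cost is above the alert threshold."""
    return cost > threshold


# Placeholder prices (NOT real AWS rates) for a busy build pipeline.
monthly_cost = estimate_s3_monthly_cost(
    storage_gb=50,
    put_requests=200_000,
    get_requests=5_000_000,
    price_per_gb=0.02,
    price_per_1k_put=0.005,
    price_per_1k_get=0.0004,
)

print("estimated monthly cost:", monthly_cost)
print("alert:", exceeds_budget(monthly_cost, threshold=3.0))
```

With these placeholder numbers, storage accounts for 1.00 of the total while requests account for 3.00, which is why request volume deserves attention when estimating the cost of a pipeline that fetches tools and artifacts on every build.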

We have now reached a point where most of the security controls are implemented in the DevOps pipeline, leveraging the cloud-native features provided by AWS and augmenting them with open-source tools where required. This should give cloud-native organizations a better understanding of how DevSecOps can play out in their environment. It is obviously not the only solution, and different organizations can tweak it to their own preferences. Cloud service providers may also introduce new services in the future that remove the need for open-source solutions.

Check out our video, which gives a running snapshot of the discussion in this blog.

Marketing

We cover this, as well as the on-premises solution, in much more detail in our DevSecOps class. If you or your organization is interested, feel free to contact us for in-house training or attend our upcoming public classes, details of which can be found under Upcoming DevSecOps Courses.

We also provide bespoke consultancy to help organisations adopt DevSecOps practices. To find out more, please get in touch.